Reading through the Online Journalism blog post on Getting full addresses for data from an FOI response (using APIs), the following phrase – relating to the composition of some Google Refine code to parse a JSON string from the Google geocoding API – jumped out at me: “This took a bit of trial and error…”

Why? Two reasons… Firstly, because it demonstrates a “have a go” attitude which you absolutely need to have if you’re going to appropriate technology and turn it to your own purposes. Secondly, because it maybe (or maybe not…) hints at a missed trick or two…

So what trick’s missing?

Here’s an example of the sort of thing you get back from the Google Geocoder:

{ "status": "OK", "results": [ { "types": [ "postal_code" ], "formatted_address": "Milton Keynes, Buckinghamshire MK7 6AA, UK", "address_components": [ { "long_name": "MK7 6AA", "short_name": "MK7 6AA", "types": [ "postal_code" ] }, { "long_name": "Milton Keynes", "short_name": "Milton Keynes", "types": [ "locality", "political" ] }, { "long_name": "Buckinghamshire", "short_name": "Buckinghamshire", "types": [ "administrative_area_level_2", "political" ] }, { "long_name": "Milton Keynes", "short_name": "Milton Keynes", "types": [ "administrative_area_level_2", "political" ] }, { "long_name": "United Kingdom", "short_name": "GB", "types": [ "country", "political" ] }, { "long_name": "MK7", "short_name": "MK7", "types": [ "postal_code_prefix", "postal_code" ] } ], "geometry": { "location": { "lat": 52.0249136, "lng": -0.7097474 }, "location_type": "APPROXIMATE", "viewport": { "southwest": { "lat": 52.0193722, "lng": -0.7161451 }, "northeast": { "lat": 52.0300728, "lng": -0.6977000 } }, "bounds": { "southwest": { "lat": 52.0193722, "lng": -0.7161451 }, "northeast": { "lat": 52.0300728, "lng": -0.6977000 } } } } ] }

The data represents a JavaScript object (JSON = JavaScript Object Notation) and as such has a standard, hierarchical form.

Here’s another way of writing the same object code, only this time laid out in a way that reveals the structure of the object:

    {
      "status": "OK",
      "results": [ {
        "types": [ "postal_code" ],
        "formatted_address": "Milton Keynes, Buckinghamshire MK7 6AA, UK",
        "address_components": [ {
          "long_name": "MK7 6AA",
          "short_name": "MK7 6AA",
          "types": [ "postal_code" ]
        }, {
          "long_name": "Milton Keynes",
          "short_name": "Milton Keynes",
          "types": [ "locality", "political" ]
        }, {
          "long_name": "Buckinghamshire",
          "short_name": "Buckinghamshire",
          "types": [ "administrative_area_level_2", "political" ]
        }, {
          "long_name": "Milton Keynes",
          "short_name": "Milton Keynes",
          "types": [ "administrative_area_level_2", "political" ]
        }, {
          "long_name": "United Kingdom",
          "short_name": "GB",
          "types": [ "country", "political" ]
        }, {
          "long_name": "MK7",
          "short_name": "MK7",
          "types": [ "postal_code_prefix", "postal_code" ]
        } ],
        "geometry": {
          "location": {
            "lat": 52.0249136,
            "lng": -0.7097474
          },
          "location_type": "APPROXIMATE",
          "viewport": {
            "southwest": {
              "lat": 52.0193722,
              "lng": -0.7161451
            },
            "northeast": {
              "lat": 52.0300728,
              "lng": -0.6977000
            }
          },
          "bounds": {
            "southwest": {
              "lat": 52.0193722,
              "lng": -0.7161451
            },
            "northeast": {
              "lat": 52.0300728,
              "lng": -0.6977000
            }
          }
        }
      } ]
    }

Making Sense of the Notation

At its simplest, the structure has the form: {"attribute":"value"}

If we parse this object into the jsonObject, we can access the value of the attribute as jsonObject.attribute or jsonObject["attribute"]. The first style of notation is called dot notation.

We can add more attribute:value pairs into the object by separating them with commas: a={"attr":"val","attr2":"val2"} and address them (that is, refer to them) uniquely: a.attr, for example, or a["attr2"].

Try it out for yourself… Copy and paste the following into your browser address bar (where the URL goes) and hit return (i.e. “go to” that “location”):

javascript:a={"attr":"val","attr2":"val2"}; alert(a.attr);alert(a["attr2"])

(As an aside, what might you learn from this? Firstly, you can “run” JavaScript in the browser via the location bar. Secondly, the JavaScript command alert() pops up an alert box:-)
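The alerts above start from an object that is already written out in JavaScript. Data from an API arrives as text, so it has to be parsed first. Here is a minimal sketch of that step using the standard JSON.parse function; the variable names and the sample string are just for illustration:

```javascript
// A JSON string, as it might arrive from a web API (illustrative data only).
const jsonText = '{"attr":"val","attr2":"val2"}';

// JSON.parse turns the text into a JavaScript object.
const jsonObject = JSON.parse(jsonText);

// Dot notation and bracket notation both reach into the parsed object.
const viaDot = jsonObject.attr;
const viaBrackets = jsonObject["attr2"];

console.log(viaDot);      // val
console.log(viaBrackets); // val2
```
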

Note that the value of an attribute might be another object.

obj={ attrWithObjectValue: { "childObjAttr":"foo" } }
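To get at a value inside the child object, you chain the attribute names together. A small sketch, reusing the object above (the extra variable names are made up for the example):

```javascript
const obj = { attrWithObjectValue: { "childObjAttr": "foo" } };

// One step down gives us the child object itself...
const child = obj.attrWithObjectValue;

// ...and two steps down gives us the value inside it.
const value = obj.attrWithObjectValue.childObjAttr;

// The bracket style chains in the same way.
const sameValue = obj["attrWithObjectValue"]["childObjAttr"];

console.log(value);     // foo
console.log(sameValue); // foo
```
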

Another thing we can see in the Google geocoder JSON code is square brackets. These define an array (one might also think of it as an ordered list). Items in the list are addressed numerically. So for example, given:

arr=[ "item1", "item2", "item3" ]

we can locate “item1” as arr[0] and “item3” as arr[2]. (Note: the index count in the square brackets starts at 0.) Try it in the browser… (for example, javascript:list=["apples","bananas","pears"]; alert( list[1] );).

Arrays can contain objects too:

list=[ "item1", {"innerObjectAttr":"innerObjVal" } ]

Can you guess how to get to the innerObjVal? Try this in the browser location bar:

javascript: list=[ "item1", { "innerObjectAttr":"innerObjVal" } ]; alert( list[1].innerObjectAttr )
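The same mix of arrays and objects is what we find in the geocoder result. As a sketch, here is a cut-down copy of the response shown earlier (most of the fields are left out to keep it short, and the variable names are my own) with a few paths into it:

```javascript
// A trimmed copy of the geocoder response from earlier in the post.
const response = {
  "status": "OK",
  "results": [ {
    "formatted_address": "Milton Keynes, Buckinghamshire MK7 6AA, UK",
    "address_components": [
      { "long_name": "MK7 6AA", "short_name": "MK7 6AA", "types": [ "postal_code" ] },
      { "long_name": "Milton Keynes", "short_name": "Milton Keynes", "types": [ "locality", "political" ] }
    ],
    "geometry": { "location": { "lat": 52.0249136, "lng": -0.7097474 } }
  } ]
};

// results is an array, so we pick its first item with [0], then dot onwards.
const address = response.results[0].formatted_address;

// address_components is an array too: [1] is its second item.
const locality = response.results[0].address_components[1].long_name;

// Objects nested inside objects just need more dots.
const lat = response.results[0].geometry.location.lat;

console.log(address);  // Milton Keynes, Buckinghamshire MK7 6AA, UK
console.log(locality); // Milton Keynes
console.log(lat);      // 52.0249136
```
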

Making Life Easier

Hopefully, you’ll now have a sense that there’s structure in a JSON object, and that this structure is what we rely on if we want to cut down on the “trial and error” when parsing such things. To make life easier, we can also use “tree widgets” to display the hierarchical JSON object in a way that makes it far easier to see how to construct the dotted path that leads to the data value we want.
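If you don’t have a tree viewer to hand, a few lines of JavaScript can do a rough version of the same job. The following is only a sketch: listPaths is a made-up helper name, not part of any library, and it simply walks an object and writes out the path to every value it finds:

```javascript
// Hypothetical helper: returns the path to every leaf value in a parsed
// JSON object. rootName is whatever variable name you want the paths to
// start from.
function listPaths(value, rootName = "obj") {
  // Strings, numbers, booleans and null are leaves: the path ends here.
  if (value === null || typeof value !== "object") {
    return [rootName];
  }
  const paths = [];
  if (Array.isArray(value)) {
    // Array items are addressed by number: path[0], path[1], ...
    value.forEach((item, i) => {
      paths.push(...listPaths(item, `${rootName}[${i}]`));
    });
  } else {
    for (const key of Object.keys(value)) {
      // Plain names can be dotted; anything else needs the ["..."] form.
      const step = /^[A-Za-z_$][A-Za-z0-9_$]*$/.test(key)
        ? `.${key}`
        : `[${JSON.stringify(key)}]`;
      paths.push(...listPaths(value[key], rootName + step));
    }
  }
  return paths;
}

// A small piece of the geocoder response, for demonstration.
const sample = {
  "status": "OK",
  "results": [ { "geometry": { "location": { "lat": 52.0249136 } } } ]
};

const paths = listPaths(sample, "response");
console.log(paths.join("\n"));
// response.status
// response.results[0].geometry.location.lat
```

Note that empty arrays and empty objects contain no values, so this sketch lists nothing for them.
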

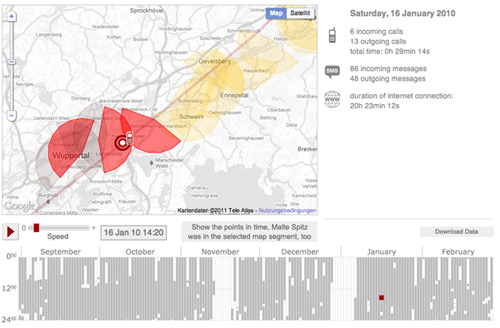

A tool I have appropriated for previewing JSON objects is Yahoo Pipes. Rather than necessarily using Pipes to build anything, I simply make use of it as a JSON viewer, loading JSON into the pipe from a URL via the Fetch Data block, and then previewing the result:

Another tool (and one I’ve just discovered) is an Air application called JSON-Pad. You can paste in JSON code, or pull it in from a URL, and then preview it again via a tree widget:

Clicking on one of the results in the tree widget provides a crib to the path…

Summary

Writing addresses into JSON objects is much easier if you have some idea of the structure of a JSON object. Tree viewers make the structure of an object explicit. By walking down the tree to the part of it you want, and “dotting” together* the nodes/attributes you select as you do so, you can quickly and easily construct the path you need.

* If the JSON attributes have spaces or non-alphanumeric characters in them, use the obj["attr"] notation rather than the dotted obj.attr notation…
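As a quick illustration of that footnote, here is a sketch using a made-up record (the attribute names are invented for the example, not taken from the geocoder):

```javascript
// An invented object with one awkward attribute name and one plain one.
const record = { "postal code": "MK7 6AA", "long_name": "Milton Keynes" };

// record.postal code would be a syntax error, so use brackets and quotes.
const postcode = record["postal code"];

// A plain name works with either style.
const placeName = record.long_name;

// The bracket style also lets you keep the attribute name in a variable.
const key = "postal code";
const viaVariable = record[key];

console.log(postcode);    // MK7 6AA
console.log(placeName);   // Milton Keynes
console.log(viaVariable); // MK7 6AA
```
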

PS Via my feeds today, though something I had bookmarked already, this Data Converter tool may be helpful in going the other way… (Disclaimer: I haven’t tried using it…)

If you know of any other related tools, please feel free to post a link to them in the comments:-)