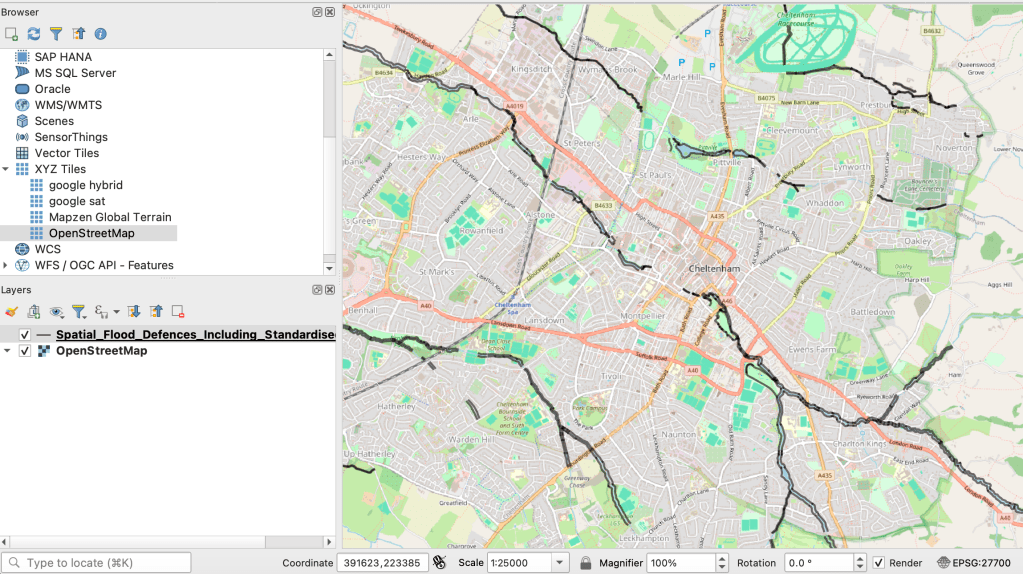

At this year’s Dataharvest I delivered a workshop on using Python in QGIS to automate the process of exporting maps for multiple locations. Here’s how to do it (you can find a GitHub repository with materials and links here).

Making a map for a story is cool — but what if you could make a map for every reader? Or if you’re working on a project involving teams in different regions or countries, what if you could give each one of those teams a map centred on their own patch?

Normally you would have to manually move the map to centre it on a key city, and then export an image. Then do it again and again and again for every area.

Luckily, QGIS has the ability to run Python code, and this repetitive task is a great excuse to start using it.