(Punchy title, eh?!) If you’re a researcher interested in local government initiatives or service provision across the UK on a particular theme, such as air quality, or you’re looking to start pulling together an aggregator of local council consultation exercises, where would you start?

Really – where would you start? (Please post a comment saying how you’d make a start on this before reading the rest of this post… then we can compare notes;-)

My first thought would be to use a web search engine and search for the topic term using a site:gov.uk search limit, maybe along with intitle:council, or at least council. This would generate a list of pages on (hopefully) local gov websites relating to the topic or service I was interested in. That approach is a bit hit or miss though, so next up I’d probably go to DirectGov, or the new gov.uk site, to see if they had a single page on the corresponding resource area that linked to appropriate pages on the various local council websites. (The gov.uk site takes a different approach to the old DirectGov site, I think, trying to find a single page for a particular council given your location rather than providing a link for each council to a corresponding service page?) If I was still stuck, OpenlyLocal, the site set up several years ago by Chris Taggart/@countculture to provide a single point of reference for looking up common administrivia details relating to local councils, would be the next thing that came to mind. For a data related query, I would probably have a trawl around data.gov.uk, the centralised (but far from complete) UK index of open public datasets.

How much more convenient it would be if there was a “vertical” search or resource site relating to just the topic or service you were interested in, that aggregated relevant content from across the UK’s local council websites in a single place.

(Erm… or maybe it wouldn’t?!)

Anyway, here are a few notes for how we might go about constructing just such a thing out of two key ingredients. The first ingredient is the rather wonderful Local directgov services list:

This dataset is held on the Local Directgov platform which provides the deep links into Local council websites for a number of services in Directgov. The Local Authority Service details holds the local council URLS for over 240 services where the customer can directly transfer to the appropriate service page on any council in England.

The date on the dataset post is 16/09/2011, although I’m not sure if the data file itself is more current (which is one of the issues with data.gov.uk, you could argue…). Presumably, gov.uk runs off a current version of the index? (Share…. 😉) Each item in the local directgov services list carries with it a service identifier code that describes the local government service or provision associated with the corresponding web page. That is, each URL has associated with it a piece of metadata identifying a service or provision type.

Which leads to the second ingredient: the esd standards Local Government Service List. This list maps service codes onto a short key phrase description of the corresponding service. So for example, Council – consultation and community engagement has service identifier 366, and Pollution control – air quality is 413. (See the standards page for the actual code/vocabulary list in a variety of formats…)

As a starter for ten, I’ve pulled the Directgov local gov URL listing and local gov service list into scraperwiki (Local Gov Web Pages). Using the corresponding scraper API, we can easily run a query looking up service codes relating to pollution, for example:

select * from `serviceDesc` where ToName like '%pollution%'

From this, we can pick up what service code we need to use to look up pages related to that service (413 in the case of air pollution):

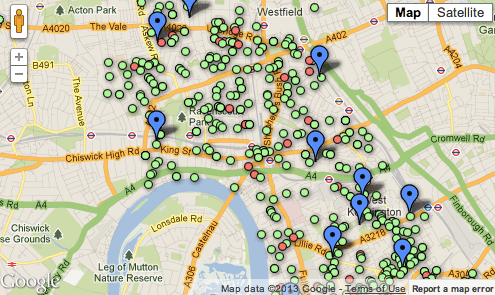

select * from `localgovpages` where LGSL=413
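
The two lookups can also be rolled into one. Here is a minimal sketch using an in-memory SQLite database as a stand-in for the scraperwiki datastore. Only the table names and the ToName and LGSL columns come from the queries above; the LGSL column on `serviceDesc`, the council and url columns, and all the sample rows are assumptions made up for illustration.

```python
import sqlite3

# Toy stand-ins for the two scraperwiki tables. Only the table names plus
# ToName and LGSL come from the queries in the post; the other columns,
# and every row below, are invented for this sketch.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE serviceDesc (LGSL INTEGER, ToName TEXT);
    CREATE TABLE localgovpages (LGSL INTEGER, council TEXT, url TEXT);
    INSERT INTO serviceDesc VALUES
        (366, 'Council - consultation and community engagement'),
        (413, 'Pollution control - air quality');
    INSERT INTO localgovpages VALUES
        (413, 'Council A', 'http://council-a.example/air-quality'),
        (413, 'Council B', 'http://council-b.example/pollution/air'),
        (366, 'Council A', 'http://council-a.example/consultations');
""")


def pages_for_topic(conn, term):
    """Return (service name, council, url) rows whose service name matches term."""
    sql = """
        SELECT s.ToName, p.council, p.url
        FROM serviceDesc AS s
        JOIN localgovpages AS p ON p.LGSL = s.LGSL
        WHERE s.ToName LIKE ?
        ORDER BY p.council
    """
    # The search term is passed as a bound parameter rather than pasted into the SQL.
    return conn.execute(sql, (f"%{term}%",)).fetchall()


rows = pages_for_topic(conn, "pollution")
for name, council, url in rows:
    print(council, url)
```

Whether the real tables can be joined this directly depends on how the service code is stored in each of them, which I haven't checked here.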

We can also get a link to an HTML table (or JSON representation, etc) of the data via a hackable URI:

https://api.scraperwiki.com/api/1.0/datastore/sqlite?format=htmltable&name=local_gov_web_pages&query=select%20*%20from%20%60localgovpages%60%20where%20LGSL%20%3D413

(Hackable in the sense we can easily change the service code to generate the table for the service with that code.)
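
As a rough sketch of that hackability, a small helper can build the URI for any service code. The base URL, the scraper name and the htmltable format value are taken from the link above; the function name is hypothetical, and the helper only constructs the URL, without checking that the API is still answering at that address.

```python
from urllib.parse import quote, urlencode

# Endpoint and scraper name as they appear in the example link above.
API_BASE = "https://api.scraperwiki.com/api/1.0/datastore/sqlite"
SCRAPER_NAME = "local_gov_web_pages"


def service_table_url(lgsl_code, fmt="htmltable"):
    """Build the datastore URL for one service code (hypothetical helper)."""
    # int() guards against anything other than a numeric code reaching the query.
    query = f"select * from `localgovpages` where LGSL={int(lgsl_code)}"
    params = {"format": fmt, "name": SCRAPER_NAME, "query": query}
    # quote_via=quote encodes spaces as %20, matching the style of the link above.
    return API_BASE + "?" + urlencode(params, quote_via=quote)


air_quality_url = service_table_url(413)
consultation_url = service_table_url(366)
print(air_quality_url)
```

Swapping 413 for 366 gives the equivalent table for consultation pages.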

So that’s the starter for 10. The next step that comes to my mind is to generate a dynamic Google custom search engine configuration file that defines a search engine that will search over just those URLs (or maybe those URLs plus the pages they link to). This would then provide the ability to generate custom search engines on the fly that searched over particular service pages from across localgov in a single, dynamically generated vertical.
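
To make that a little more concrete, here is a minimal sketch of generating a custom search engine annotations file from a list of service page URLs. It assumes the annotations XML layout of an Annotations root containing one Annotation element per URL pattern, each carrying a Label, which is how I understand the Google CSE format to work; the label value and function name are placeholders, and the URLs are made up.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit


def cse_annotations(urls, label="_cse_hypothetical_label"):
    """Return an annotations XML string with one entry per page URL.

    The label is a placeholder: a real engine would need its own label value.
    """
    root = ET.Element("Annotations")
    for url in urls:
        parts = urlsplit(url)
        # Patterns are written without the scheme, e.g. www.example.com/page
        pattern = parts.netloc + parts.path
        if parts.query:
            pattern += "?" + parts.query
        annotation = ET.SubElement(root, "Annotation", about=pattern)
        ET.SubElement(annotation, "Label", name=label)
    return ET.tostring(root, encoding="unicode")


# Made-up URLs standing in for the rows returned for one service code.
annotations_xml = cse_annotations([
    "http://council-a.example/air-quality",
    "http://council-b.example/pollution/air",
])
print(annotations_xml)
```

Feeding this the URLs returned for a given service code would give one annotations file per service, though searching the pages those URLs link to would need broader patterns than the exact page addresses used here.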

A second thought is to grab those pages, index them myself, crawl them/scrape them to find the pages they link to, and index those pages also (using something like tf-idf within each local council site to identify and remove common template elements from the index). (Hmmm… that could be an interesting complement to scraperwiki… SolrWiki, a site for compiling lists of links, indexing them, crawling them to depth N, and then configuring search ranking algorithms over the top of them… Hmmm… It’s a slightly different approach to generating custom search engines as a subset of a monolithic index, which is how the Google CSE and (previously) the Yahoo BOSS engines worked… Not scaleable, of course, but probably okay for small index engines and low thousands of search engines?)
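
The template stripping idea can be sketched very crudely. The version below is not full tf-idf: it only uses the document frequency half, and works on whole lines of text rather than individual terms, dropping any line that turns up on most of the pages from the same site. The function name, the 0.8 threshold and the sample pages are all assumptions.

```python
from collections import Counter


def strip_template_lines(pages, max_share=0.8):
    """Drop lines that appear on at least max_share of a site's pages.

    pages maps url -> page text, with all pages taken from the same site.
    """
    doc_freq = Counter()
    for text in pages.values():
        # A set, so each line counts at most once per page.
        doc_freq.update({line.strip() for line in text.splitlines() if line.strip()})

    cutoff = max_share * len(pages)
    cleaned = {}
    for url, text in pages.items():
        kept = [
            line.strip()
            for line in text.splitlines()
            if line.strip() and doc_freq[line.strip()] < cutoff
        ]
        cleaned[url] = "\n".join(kept)
    return cleaned


# Made-up pages sharing one navigation line.
pages = {
    "http://council-a.example/air": "Council A | Home | Contact us\nAir quality monitoring reports",
    "http://council-a.example/bins": "Council A | Home | Contact us\nBin collection days",
    "http://council-a.example/tax": "Council A | Home | Contact us\nPay your council tax",
}
cleaned = strip_template_lines(pages)
print(cleaned["http://council-a.example/air"])
```

With only a handful of pages per site the threshold would need some care, since a line shared by two related service pages could easily be mistaken for template furniture.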