Nas minhas aulas e treinamentos de jornalismo de dados, costumo falar sobre os tipos mais comuns de histórias que podem ser encontradas em bancos de dados. Então, selecionei 100 reportagens baseadas em dados, analisei-as e verifiquei com qual frequência cada um desses ângulos é utilizado.

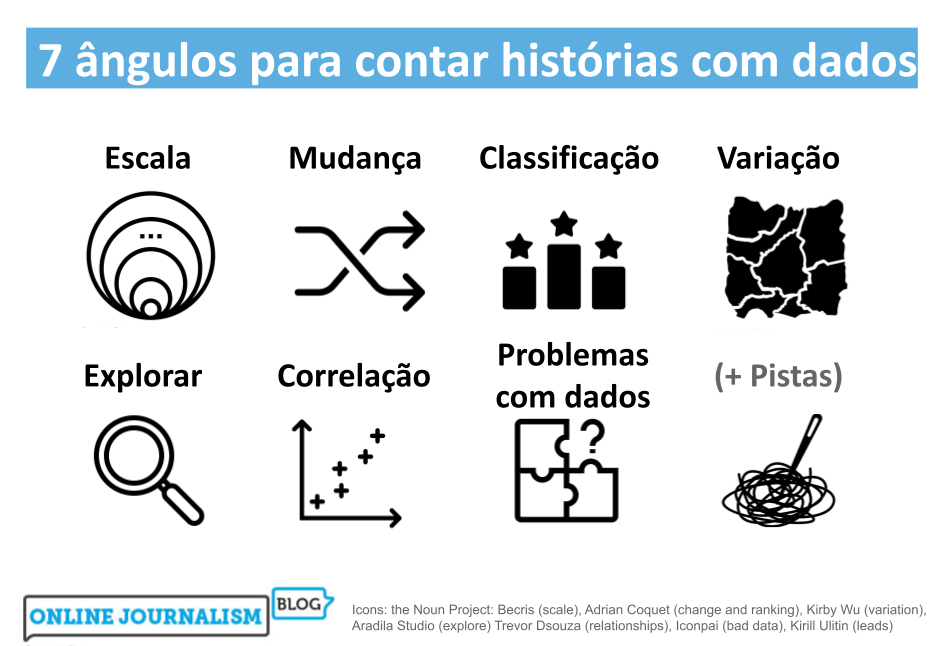

Cheguei à conclusão de que, na verdade, existem sete ângulos principais para reportagens e histórias baseadas em dados. Muitas histórias incorporam outros ângulos como dimensões secundárias da narrativa (uma história de mudança pode passar a falar sobre a escala de algo, por exemplo), mas todas as histórias de jornalismo de dados que examinei levaram um desses ângulos como fio-condutor.

Neste post, examino como os sete ângulos mais comuns podem ajudar você a ter ideias para histórias e reportagens, assim como a variedade de execuções e as principais considerações para se ter em mente.