In 2007 Bill Kovach and Tom Rosenstiel published The Elements of Journalism. With the concept of ‘journalism’ increasingly challenged by the fact that anyone could now publish to mass audiences, their principles represented a welcome platform-neutral attempt to articulate exactly how journalism could be untangled from the vehicles that carried it and the audiences it commanded.

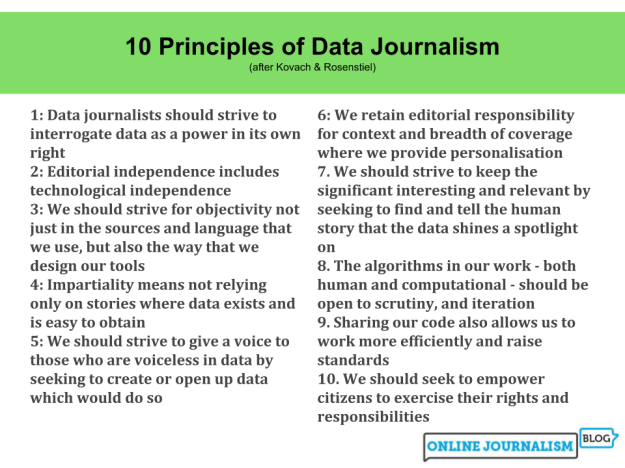

In this extract from a forthcoming book chapter* I attempt to use Kovach and Rosenstiel’s principles (outlined in part 1 here) as the starting point for a set of principles that might underpin (modern) data journalism as it enters its second and third decades.

Principle 1: Data journalists should strive to interrogate data as a power in its own right

When data journalist Jean-Marc Manach set out to establish how many people had died while migrating to Europe, he discovered that no EU member state held any data on migrants’ deaths. As one public official put it, dead migrants “aren’t migrating anymore, so why care?”

Similarly, when the BBC sent Freedom of Information requests to mental health trusts about their use of face-down restraint, six replied saying they could not say how often any form of restraint was used — despite being statutorily obliged to “document and review every episode of physical restraint which should include a detailed account of the restraint” under the Mental Health Act 1983.

The collection of data, the definitions used, and the ways that data informs decision making are all exercises of power in their own right. The availability, accuracy and employment of data should all be particular focuses for data journalism as we see the expansion of smart cities and wearable technology.