Following my previous post about the rise of liveblogging, I wanted to provide a simple list of ideas for student journalists wanting to get some liveblogging experience. Some people assume that you need to wait for a big news event to start a liveblog, but the format has proved particularly flexible in serving a whole range of editorial demands. Here are just a few:

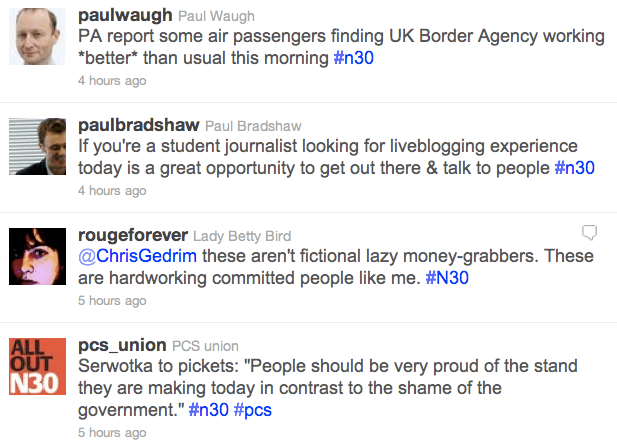

1. A protest or demonstration

Let’s start with the obvious one. Protests and demonstrations are normally planned and announced in advance, so use a tool like Google Alerts to receive emails when the terms are mentioned, as well as following local campaigning groups and local branches of national campaigns. Issues to consider:

- There will be conflicting versions of events so seek to verify as much as possible – from both demonstrators and police, and any other parties, such as counter-demonstrations.

- Know as many key facts ahead of time as possible to be able to contextualise any claims from any side. Have links to hand – Delicious is particularly useful as a way of organising these.

- Make contacts ahead of the event to find out who will be recording it and how those records will be published (e.g. livestream, YouTube, Flickr, Google Maps etc). Make sure you have mobile phone numbers in your contacts book and are following those people on the relevant social network. Try to anticipate where you will be needed most – where will the gaps in coverage be?

- Don’t just cover the event on the day – build up to it and plan for the aftermath. Walk round the route to plan for the event – and post a photoblog while you’re at it. Interview key participants for profiles while you make contact. Join online forums and Facebook groups and engage with discussions on key issues.

- Summarise regularly to help those just joining find their feet (thanks to Ed Walker in the comments for this one – more tips in his blog post on liveblogging).

2. An industry conference

Whether you’re reporting on a particular location or a shared interest, there will be industries that play a key role in that. And industries have conferences. Use a quick Google search or some of the specialist events listing and organisation services like Exhibitions.co.uk to find them.

Issues to consider:

- Industries have jargon. Try to familiarise yourself with that ahead of time (follow the specialist press and key figures on social media) or you’ll mis-hear key words and phrases.

- There are often different events happening at the same time. Plan your schedule so you know where your priorities are.

- Don’t follow the crowd. Often you will add more value by missing a session in order to conduct an interview or post some deeper analysis. This will also require preparation: organise to meet key individuals ahead of time; read up on the key issues.

- As above, you’ll also need to know what’s going to be covered well and who’s going to be publishing online at the event. Build-ups will also be useful.

3. A meeting

Council or board meetings, hearings, committees and other public and semi-public meetings often have significant implications for local communities, sections of society or particular industries. They are also often poorly covered. This provides a real opportunity for enterprising individuals to add value for their readership.

In addition, there are more informal meetings of small groups which you can find on sites such as Upcoming and Eventbrite.

Issues to consider:

- These meetings can easily pass under the radar so make sure you know when they’re taking place. For council meetings, Openly Local’s listings are particularly useful.

- Many meetings have to publish their minutes – keep up to date with these (ask for them if you have to – use the Freedom of Information Act if you cannot get them any other way) so you know the background.

- Know who’s who – and make sure you know which is which. Write down their names and where they’re sitting so you can attribute quotes correctly.

- Prepare for nothing much to happen, most of the time. Concentration is key: newsworthy nuggets will be hidden in dull proceedings – and they won’t be clearly signposted. One advantage of liveblogging is that others can bring your attention to issues you might miss in the flow of reporting.

4. The build up to an event

Anticipation of an event can be an event in itself. The Birmingham Mail’s Friday afternoon liveblogs previewing the weekend’s football fixture are a particularly successful example of this. Really, this is a live chat, with the liveblog format providing the editorial urgency to give it a news twist.

Issues to consider:

- Have prompts ready to get things started and inject new momentum when conversation dries up – prepare as you would for an interview, only with 100 possible interviewees.

- Anticipate the main questions and have key facts and links to hand.

- Get the tone right: can you have a bit of banter? It might be worth preparing a joke or two, or looking for opportunities to make them.

5. Breaking news

While you cannot plan for the exact timing of breaking news, you can prepare for some news events. At the most basic level, you should know how to quickly launch a liveblog once you know you need to do so. Other issues to consider:

- Organise your day-to-day newsgathering so that it is as easy as possible to access relevant information quickly. Once again, Delicious is a good tool in this regard for quickly accessing your own personal archive on any particular subject.

- There will be particular events – the deaths of celebrities, for example – that you can prepare for. How much effort you spend on this depends on how likely you think the event is, and how important it is to your audience.

- Breaking news is an adrenaline rush of anticipation fed by an unhealthy diet of reaction, rumour and conjecture – only punctuated occasionally by bursts of actual news. Be aware of the dangers of conjecture and the value in debunking rumours, and try to avoid making things worse.

6. Your own journey

You don’t need someone else to organise something for you to start a liveblog: you can do something yourself, and liveblog your progress. Considerations:

- Ideally it should be something with a beginning, a middle and an end over a limited period of time: running a marathon, for example (if you can hold the mobile phone), or collecting 1,000 signatures for a campaign.

- It should also involve others: the liveblog format lends itself to outside contributions.

- You’ll have to work harder to make it interesting, so don’t update unless something has changed, and prepare material so you have interesting things to fill the gaps with.

7. A press conference

A familiar sight on 24-hour news channels, press conferences are an obvious candidate for liveblog treatment. You can also add to this similar political events such as the Budget, debates, or Prime Minister’s Questions. The main consideration is that you will be covering the conference alongside other journalists, so your coverage needs to be distinctive. Here are some things to consider:

- Controlled as they are, press conferences don’t often generate a constant supply of newsworthy quotes, so when a spokesperson is trotting out platitudes or steering questions back to the particular angle she wants to sell, tell us about other things going on in the room: how is the journalist reacting? What is the PR rep doing?

- If the situation is likely to be tightly controlled, you have a better chance of predicting what will be said, and of preparing for that. In particular, if a person is going to try to ‘spin’ facts in a particular direction, have the facts and evidence ready to ‘unspin’ them – as always, including links.

- If you want to use one of your question opportunities to give your audience a voice, do so.

- Likewise, tap into the wit and intelligence of users to liveblog their reactions outside the room to the questions and answers being exchanged inside.

8. A staged event

A liveblog is an obvious choice for a live event, and there are plenty of sporting and cultural events to cover. The obvious candidates – football matches, popular Olympic events – should be avoided, as existing and live coverage will be more than sufficient, so look to less well-covered sports, concerts, performances, fashion shows, exhibitions and other events. Think about:

- Be aware of rights deals and other restrictions. Live coverage of certain popular sports, such as Premiership football, may be limited. There may be restrictions on taking photographs of cultural events, or recording audio or video at a music event.

- As with meetings (above) it’s crucial to know who’s who and have a crib sheet of related facts.

- Be descriptive and engage the senses. Tell us about the atmosphere, smells, sounds, and other elements that make people feel like they’re there.

9. A launch or opening

Product launches and store openings can be very dull affairs, but occasionally generate significant interest – particularly among technology and fashion fans. The interest doesn’t generally make for a sustained news event, so your liveblog is likely to use that interest as the basis for some broader editorial angles. The tips on a ‘build up to an event’ above apply again here, as that is essentially what this is, with the following differences:

- Launches and openings are social gatherings, so try focusing on the people there: interview them, paint a picture of how diverse or similar they are. Tap into their expertise or enthusiasm; work with them.

- Think about what people might want to know after the launch/opening: tips and tricks on using new technology? The items that are flying off the shelves? Have experts and inside sources on call.

10. Add your own here

Like blogging generally, liveblogs are just a platform, with the flexibility to adapt to a range of circumstances. If Popjustice can liveblog “Things we can learn from Greg James’ interview with Lady Gaga” then you can liveblog anything. If you’ve used them for a purpose not listed here, please let me know and I’ll add it to the list.

Likewise, if you have any tips to add from your own experiences of covering events, please add them in the comments.

UPDATE (November 2014): The Birmingham Mail used liveblogging to commemorate an anniversary:

“From the morning of Friday November 21, the Mail will be live blogging and live tweeting in ‘real time’ the events of the day, from the stories of those preparing for a night out on the town, to the moment the bomb warning was phoned through to the Post and Mail, to the reaction of the emergency services.”