
Category Archives: online journalism

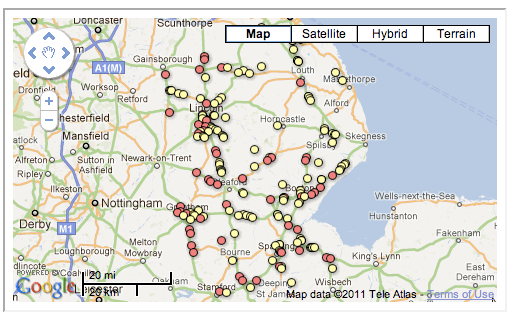

How to: convert easting/northing into lat/long for an interactive map

Google Fusion Tables is great for creating interactive maps from a spreadsheet – but it isn’t too keen on easting and northing. That can be a problem as many government and local authority datasets use easting and northing to describe the geographical position of things – for example, speed cameras.

So you’ll need a way to convert easting and northing into something that Fusion Tables does like – such as latitude and longitude.

Here’s how I did it – quickly.

How a musician and a Sikh TV channel dominated coverage of the Birmingham riots

One image from last night guaranteed not to have made it onto the front page - via Birmingham Riots 2011

It’s one thing to cover rioting on the doorstep of the national press – it’s quite another when squeezed regional newsrooms have to do the same. And as rioting in the UK spread from London to Birmingham and then other cities, some unlikely suspects showed how to cover a riot online even when you don’t have a newsroom.

Dominating online coverage in Birmingham was not a local newspaper or broadcaster but a Tumblr site – Birmingham Riots 2011 – set up by musician Casey Rain. Over dozens of entries Casey posted countless reports of what was taking place, and a range of photos and video footage which dwarfed the combined coverage of regional press and broadcast.


Host your own crowdsourced investigation with the Help Me Investigate plugin

When we open-sourced the code for Help Me Investigate the plan was to move from a single site to a decentralised, networked structure. Now, thanks to Andy Dickinson, it has become even easier for anyone to host their own journalism crowdsourcing platform.

Since a conversation a couple of months ago, Andy has been tweaking a WordPress plugin that replicates the functionality of the previous Help Me Investigate site. It’s now ready for use.

The plugin adds an ‘Investigations’ page to your self-hosted WordPress blog which holds ‘sticky’ pages for any investigations you want to pursue, and allows you to break those down into distinct challenges that anyone can contribute to.

You can also add tags and grade progress, and limit access to make an investigation more private. Full functionality and limitations are listed on the plugin page.

SFTW: Asking questions of a webpage – and finding out when those answers change

Previously I wrote on how to use the =importXML formula in Google Docs to pull information from an XML page into a conventional spreadsheet. In this Something For The Weekend post I’ll show how to take that formula further to grab information from webpages – and get updates when that information changes.

Asking questions of a webpage – or find out when the answer changes

Despite its name, the =importXML formula can be used to grab information from HTML pages as well. This post on SEO Gadget, for example, gives a series of examples ranging from grabbing information on Twitter users to price information and web analytics (it also has some further guidance on using these techniques, and is well worth a read for that).

Asking questions of webpages typically requires more advanced use of XPath than I outlined previously – and more trial and error.

This is because, while XML is a language designed to provide structure around data, HTML – used as it is for a much wider range of purposes – isn’t quite so tidy.

Finding the structure

To illustrate how you can use =importXML to grab data from a webpage, I’m going to grab data from Gorkana, a job ads site.

If you look at their journalists jobs page, you’ll see all sorts of information, from navigation and ads to feeds and policies. This is how you could grab a specific piece of data from a page, and put it into a table structure, to answer any questions you might have:

Make a note of the first word or phrase in the section you want (e.g. “Senior account executive”) then right-click on the page and select View Source or whatever option allows you to see the HTML code behind the page.

You could scroll through this to try to find the bit you want, but it’s easier to use your search facility to find that key phrase you noted earlier (e.g. “Senior account executive”)

What you’re hoping to find is some sort of div class tag just above that key phrase – and in this case there’s one called div class="jobWrap".

This means that the creator of the webpage has added some structure to it, wrapping all their job ads in that div.

We just need to write a formula that is equally specific.

Writing the formula

Open up a spreadsheet in Google Docs and write the following formula in cell B1:

=importXML("http://www.gorkanajobs.co.uk/jobs/journalist/", "//div[starts-with(@class, 'jobWrap')]")

When you press Enter you should see 3 columns filled with values from that particular part of the webpage: the job title; the package; and a brief description. Now that you have this data in a structured format you could, for example, work out average wages of job ads, or the most common job titles.

But how did that formula work? As I’ve explained most of =importXML in the previous post, I’ll just explain the query part here. So:

//div

is looking for a tag that begins <div

[starts-with

is specifying that this div must begin in a particular way, and it does so by grabbing one thing, and looking for another thing at the start of it:

(@class, 'jobWrap')

is saying that the div class should begin with 'jobWrap' (bonus points: the @ sign indicates an attribute; class is an attribute of the div; 'jobWrap' is the value of the attribute)

]

…finishes off that test.

Even if you don’t understand the code itself, you can adapt it to your own purposes as long as you can find the right div class tag and replace ‘jobWrap’ with whatever the value is in your case.

It doesn’t even have to be a div class – you could replace //div with other tags such as //p for each paragraph fitting particular criteria.

You can also replace @class with another attribute, such as @id or @title. It depends on the HTML of the page you’re trying to grab information from.
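If it helps to see the same logic outside a spreadsheet, here is a minimal Python sketch of what that query is doing. It is not how Google Docs implements =importXML; the sample page is invented for illustration (it is not Gorkana's real HTML), and divs_starting_with is a hypothetical helper name. It also assumes the page is well-formed enough to parse as XML, which real webpages often are not.

```python
import xml.etree.ElementTree as ET

# Invented, well-formed stand-in for a job listings page (an assumption,
# not the real HTML of any site).
SAMPLE_PAGE = """
<html><body>
  <div class="nav">Home | Jobs</div>
  <div class="jobWrap featured">
    <h4>Senior account executive</h4>
    <ul><li>London</li></ul>
    <p>Example description one.</p>
  </div>
  <div class="jobWrap">
    <h4>Reporter</h4>
    <ul><li>Birmingham</li></ul>
    <p>Example description two.</p>
  </div>
</body></html>
"""


def divs_starting_with(page, prefix):
    """Return one row per <div> whose class attribute begins with `prefix`."""
    root = ET.fromstring(page)
    rows = []
    for div in root.iter("div"):                      # the //div part
        if div.get("class", "").startswith(prefix):  # the starts-with(@class, ...) test
            # The text inside each child tag becomes one column of the row.
            rows.append(["".join(child.itertext()).strip() for child in div])
    return rows


rows = divs_starting_with(SAMPLE_PAGE, "jobWrap")
for row in rows:
    print(row)
```

Note that the first matching div has the class "jobWrap featured": it still matches because the test only checks how the value begins, which is why starts-with is handy when a class has extra words tacked on.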

Where’s the structure come from?

Why has this data been put into 3 columns? The answer is in the HTML again. You’ll see that the job title is between h4 tags. The location and package are within ul tags and the description is within p tags, before each div is closed with the tag /div

But if you keep reading that HTML you’ll also see that after that /div there is some more information within a different div tag: div class="adBody". This contains the name of the recruiter and a link to a page where you can apply. There’s also a third div with a link to an image of the recruiter.

You could adapt your importXML formula to grab these instead – or add a new formula in D2 to add this extra information alongside the others (watch out for mismatches where one div may be missing for some reason).

Finding and cleaning links

You’ll notice that the formula above grabs text, but not the links within each job ad. To do that we need to adapt the formula as follows. Try typing this in cell D2:

=ImportXML("http://www.gorkanajobs.co.uk/jobs/journalist/", "//div[starts-with(@class, 'jobWrap')]//@href")

This is identical to the previous formula, with one addition at the end:

//@href

What this does is grab the value of any link in the HTML. In other words, the bit after a href="

You’ll notice that the results are partial URLs, such as /job/3807/senior-account-executive-account-manager/

These are known as relative URLs, because they are relative to the site they are on, but will not work when placed on another site.

This is easily cleaned up. In cell E2 type the following:

=CONCATENATE("http://www.gorkanajobs.co.uk",D2)

This creates a new URL beginning with http://www.gorkanajobs.co.uk and ending with the contents of cell D2 – the relative URL. Copy the formula down the column so it works for all cells in column D.
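The same two steps (grab every href, then turn each relative URL into a full one) can be sketched in Python. The HTML snippet below is invented for illustration; only the relative URL and the site address come from the example above. urljoin is the standard library's way of doing what CONCATENATE does here.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urljoin

BASE_URL = "http://www.gorkanajobs.co.uk"

# Invented stand-in for a single job ad (an assumption about the markup).
SAMPLE_AD = """
<div class="jobWrap">
  <h4><a href="/job/3807/senior-account-executive-account-manager/">Senior account executive</a></h4>
  <p>Example description.</p>
</div>
"""

ad = ET.fromstring(SAMPLE_AD)

# The //@href part: collect the href attribute of any element that has one.
relative_urls = [el.get("href") for el in ad.iter() if "href" in el.attrib]

# The CONCATENATE part: resolve each relative URL against the site address.
absolute_urls = [urljoin(BASE_URL, rel) for rel in relative_urls]

print(absolute_urls)
```

One advantage of urljoin over plain concatenation is that it leaves a URL alone if it is already absolute, so a page that mixes relative and full links would not end up with a doubled domain.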

Not always so tidy

Not all websites will be so structured. The more structured the webpage – or the data within it – the better. But you may have to dig into the HTML and/or tweak your formula to find that structure. Or you may have to settle for some rough and ready data that you clean later.

The key advantage of =importXML, however, is how it is able to pull information from HTML into a table that you can then interrogate, with different columns for different parts of that data.

For more help with these processes you can find explanations of how to write expressions in XPath here but be prepared to use trial and error to get the right expression for the question you’re asking. The Vancouver Data Blog offers some specific examples that can be easily adapted.

Getting updates from your spreadsheet

Finally, this can be useful because Google Docs allows you to receive notifications whenever any changes are made, and to publish your spreadsheet as an RSS feed. This is explained in this blog post, which is also the source of the movie above.

And if you want to see all of this in action I’ve published an example spreadsheet demonstrating all the above techniques here.

Two New Cabinet Office Open Data Consultations: Data Policy and Making Open Data Real

Via the Guardian Datablog, I see that the Cabinet Office has just opened up a couple of consultations around open data:

– Consultation on Data Policy for a Public Data Corporation [homepage] [Consultation]

Here are the consultation questions (also available via SurveyMonkey: PDC consultation):

Chapter 4 – Charging for PDC information

- How do you think Government should best balance its objectives around increasing access to data and providing more freely available data for re-use year on year within the constraints of affordability? Please provide evidence to support your answer where possible.

- Are there particular datasets or information that you believe would create particular economic or social benefits if they were available free for use and re-use? Who would these benefit and how? Please provide evidence to support your answer where possible.

- What do you think the impacts of the three options would be for you and/or other groups outlined above? Please provide evidence to support your answer where possible.

- A further variation of any of the options could be to encourage PDC and its constituent parts to make better use of the flexibility to develop commercial data products and services outside of their public task. What do you think the impacts of this might be?

- Are there any alternative options that might balance Government’s objectives which are not covered here? Please provide details and evidence to support your response where possible.

Chapter 5 – Licensing

- To what extent do you agree that there should be greater consistency, clarity and simplicity in the licensing regime adopted by a PDC?

- To what extent do you think each of the options set out would address those issues (or any others)? Please provide evidence to support your comments where possible.

- What do you think the advantages and disadvantages of each of the options would be? Please provide evidence to support your comments

- Will the benefits of changing the models from those in use across Government outweigh the impacts of taking out new or replacement licences?

Chapter 6 – Regulatory oversight

- To what extent is the current regulatory environment appropriate to deliver the vision for a PDC?

- Are there any additional oversight activities needed to deliver the vision for a PDC and if so what are they?

- What would be an appropriate timescale for reviewing a PDC or its constituent parts public task(s)?

And the second consultation (which is probably worth reading in the context of the Open Public Services White Paper, http://www.cabinetoffice.gov.uk/resource-library/open-public-services-white-paper [white paper PDF, feedback website]?)

– Making Open Data Real: A Public Consultation [homepage] [Consultation]

- Glossary of key terms [link]

- An enhanced right to data: how do we establish stronger rights for individuals, businesses and other actors to obtain, use and re-use data from public service providers? [link]

- Setting transparency standards: what would standards that enforce this right to data among public authorities look like? [link]

- Corporate and personal responsibility: how would public service providers be held to account for delivering open data through a clear governance and leadership framework at political, organisational and individual level? [link]

- Meaningful Open Data: how should we ensure collection and publication of the most useful data, through an approach enabling public service providers to understand the value of the data they hold and helps the public at large know what data is collected? [link]

- Government sets the example: in what ways could we make the internal workings of government and the public sector as open as possible? [link]

- Innovation with Open Data: to what extent is there a role for government to stimulate enterprise and market making in the use of open data? [link]

I haven’t had chance to read through the consultation docs yet, but I’ll try and comment somewhere, as well as responding…

The way the consultations are presented

As to the way the consultations are presented themselves, two approaches have been taken:

– the PDC consultation embeds documents at chapter level hosted on Scribd in a preview widget, with questions made available via a Word document or via SurveyMonkey. There doesn’t appear to be an opportunity to comment on the BIS site that is hosting the PDC consultation, even though it’s a WordPress platform running the Commentariat2 theme. To my mind, the way this consultation has been published, it’s not really of the web, and, to use a technical term, feels a little bit horrible to me… Maybe they don’t want flame wars on the bis.gov.uk domain about “Charging for PDC information”?!;-)

– the Making it Real consultation is hosted on the data.gov.uk site, with HTML text split at “chapter” (section) level, and commenting at that level via a single bottom of the page comment box. Where documents take close reading, I think this makes commenting difficult: if you want to refer to specific, detailed points in the consultation document, I’d say it makes sense to be able to see comment at the point of reference. That is, the comment box needs to be where you can see the actual bit of text you are commenting on (which is one reason why we often annotate documents with marginalia, rather than on a separate piece of paper). Where the comment box is fixed at the bottom of the page, you need two windows open to have side by side commenting and viewing of the actual text you are commenting on.

If we hadn’t decided that things had moved on enough in the way consultations were being handled to close WriteToReply (WriteToReply is closing. Come get your data if you want it), I think there’s a good chance we would have hosted both these consultations… Maybe our thinking that WriteToReply had nudged things far enough was a bit hasty? (The digress.it theme is out there, but as yet hasn’t been trialled on a departmental basis, I don’t think, even though we did try to respond to the commissioned accessibility audit. (Are Scribd docs accessible?) Digress.it is running on the JISCPress site though.)

(I’m suddenly fired up again by the thought that consultation docs could be so much more “of the web” as well as easier to engage with… Hmmm, when’s the closing date for these consultations? Maybe there is time for one last WriteToReply outing…?)

PS How did I miss out on subscribing to the Government Digital Service? e.g. Neil Williams on A vision for online consultation and policy engagement

Merging Two Different Datasets Containing a Common Column With R and R-Studio

Another way for the database-challenged (such as myself!) to merge two datasets that share at least one common column…

This recipe uses the cross-platform stats analysis package, R. I use R via the R-Studio client, which provides an IDE wrapper around the R environment.

So for example, here’s how to merge a couple of files sharing elements in a common column…

First, load in your two data files – for example, I’m going to load in separate files that contain qualifying and race stats from the last Grand Prix:

We can merge the datasets using a command of the form:

m=merge(hun_2011racestats,hun_2011qualistats,by="driverNum")

The by parameter identifies which column we want to merge the tables around. (If the two datasets have different column names, you need to set by.x= and by.y= to specify the column from each dataset that is the focus for merging).

So for example, in the simple case where we are merging around two columns of the same name in different tables:

After the merge, columns whose names appear in both tables (other than the column we merged on) are told apart by a suffix: those from the first table have .x added, and those from the second, .y.
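For anyone who finds it easier to read the logic spelled out, here is a rough Python sketch of what a default merge does. It is not R's implementation: the merge function below is a hypothetical helper, the driver data is invented, and it assumes both tables are non-empty and that each value in the by column appears only once in the second table.

```python
def merge(left, right, by):
    """Inner-join two lists of dict rows on the column named `by`.

    Simplifying assumptions (not true of R's merge in general): both tables
    are non-empty, and every key appears at most once in `right`.
    """
    # Column names present in both tables, apart from the join column.
    shared = (set(left[0]) & set(right[0])) - {by}
    right_by_key = {row[by]: row for row in right}
    merged = []
    for lrow in left:
        rrow = right_by_key.get(lrow[by])
        if rrow is None:
            continue  # no match in the second table: row is dropped
        out = {by: lrow[by]}
        for col, val in lrow.items():
            if col != by:
                out[col + ".x" if col in shared else col] = val
        for col, val in rrow.items():
            if col != by:
                out[col + ".y" if col in shared else col] = val
        merged.append(out)
    return merged


# Invented example rows, not real timing data.
race = [
    {"driverNum": 1, "name": "Driver A", "ultimate": 80.1},
    {"driverNum": 2, "name": "Driver B", "ultimate": 80.9},
]
quali = [
    {"driverNum": 1, "name": "Driver A", "ultimate": 79.5},
    {"driverNum": 3, "name": "Driver C", "ultimate": 79.8},
]

m = merge(race, quali, by="driverNum")
print(m)
```

Only driver 1 appears in both tables, so the merged table has a single row, with name and ultimate each appearing twice under their .x and .y names.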

We can then export the combined dataset as a CSV file:

write.csv(m, file = "demoMerge.csv")

[If you want to extract a subset of the columns, specify the required columns in an R command of the form: m2=m[c("driverNum","name.x","ultimate.x","ultimate.y")] See also: R: subset]

Simples;-)

PS in the above example, the merge table only contains merged rows. If there are elements in the common column of one table, but not the other, that partial data will not be included in the merged table. To include all rows, set all=TRUE. To include all rows from the first table, but not unmatched rows from the second, set all.x=TRUE; (the cells from columns in the unmatched row of the second table will be set to NA). (all.y=TRUE is also legitimate). From the R merge documentation:

In SQL database terminology, the default value of all = FALSE [the default] gives a natural join, a special case of an inner join. Specifying all.x = TRUE gives a left (outer) join, all.y = TRUE a right (outer) join, and both (all = TRUE) a (full) outer join. DBMSes do not match NULL records, equivalent to incomparables = NA in R.
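To make the four join types concrete, here is a small Python sketch. The merge_keys function and its all_x and all_y flags are hypothetical names chosen to mirror R's all.x and all.y; each table is boiled down to a simple lookup from driver number to one invented value, and None stands in for R's NA.

```python
def merge_keys(left, right, all_x=False, all_y=False):
    """Join two {key: value} tables; missing cells become None (like R's NA)."""
    keys = [k for k in left if k in right]            # inner join: keys in both
    if all_x:
        keys += [k for k in left if k not in right]   # keep unmatched rows from the first table
    if all_y:
        keys += [k for k in right if k not in left]   # keep unmatched rows from the second table
    return {k: (left.get(k), right.get(k)) for k in keys}


# Invented values: driver 2 only raced, driver 3 only qualified.
race = {1: "race-1", 2: "race-2"}
quali = {1: "quali-1", 3: "quali-3"}

inner = merge_keys(race, quali)                          # all = FALSE
left_join = merge_keys(race, quali, all_x=True)          # all.x = TRUE
right_join = merge_keys(race, quali, all_y=True)         # all.y = TRUE
full = merge_keys(race, quali, all_x=True, all_y=True)   # all = TRUE

for label, table in [("inner", inner), ("left", left_join),
                     ("right", right_join), ("full", full)]:
    print(label, table)
```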

For other ways of combining data from two different data sets, see:

– Merging Datasets with Common Columns in Google Refine

– A Further Look at the Orange Data Playground – Filters and File Merging

– Merging CSV data files with Google Fusion Tables

If you know of any other simple ways of joining data files about a common column, please reveal all in the comments:-)

SFTW: How to scrape webpages and ask questions with Google Docs and =importXML

Image by dullhunk on Flickr

Here’s another Something for the Weekend post. Last week I wrote a post on how to use the =importFeed formula in Google Docs spreadsheets to pull an RSS feed (or part of one) into a spreadsheet, and split it into columns. Another formula which performs a similar function more powerfully is =importXML.

There are at least 2 distinct journalistic uses for =importXML:

- You have found information that is only available in XML format and need to put it into a standard spreadsheet to interrogate it or combine it with other data.

- You want to extract some information from a webpage – perhaps on a regular basis – and put that in a structured format (a spreadsheet) so you can more easily ask questions of it.

The first task is the easiest, so I’ll explain how to do that in this post. I’ll use a separate post to explain the latter.

Converting an XML feed into a table

If you have some information in XML format it helps if you have some understanding of how XML is structured. A backgrounder on how to understand XML is covered in this post explaining XML for journalists.

It also helps if you are using a browser which is good at displaying XML pages: Chrome, for example, not only staggers and indents different pieces of information, but also allows you to expand or collapse parts of that, and colours elements, values and attributes (which we’ll come on to below) differently.

Say, for example, you wanted a spreadsheet of UK council data, including latitude, longitude, CIPFA code, and so on – and you found the data, but it was in XML format at a page like this: http://openlylocal.com/councils/all.xml

To pull that into a neatly structured spreadsheet in Google Docs, type the following into the cell where you want the import to begin (try typing in cell A2, leaving the first row free for you to add column headers):

=ImportXML("http://openlylocal.com/councils/all.xml", "//council")

The formula (or, more accurately, function) needs two pieces of information, which are contained in the parentheses and separated by a comma: a web address (URL), and a query. Or, put another way:

=importXML("theURLinQuotationMarks", "theBitWithinTheURLthatYouWant")

The URL is relatively easy – it is the address of the XML file you are reading (it should end in .xml). The query needs some further explanation.

The query tells Google Docs which bit of the XML you want to pull out. It uses a language called XPath – but don’t worry, you will only need to note down a few queries for most purposes.

Here’s an example of part of that XML file shown in the Chrome browser:

The indentation and triangles indicate the way the data is structured. So, the <councils> tag contains at least one item called <council> (if you scrolled down, or clicked on the triangle to collapse <council> you would see there are a few hundred).

And each <council> contains an <address>, <authority-type>, and many other pieces of information.

If you wanted to grab every <council> from this XML file, then, you use the query “//council” as shown above. Think of the // as a replacement for the < in a tag – you are saying: ‘grab the contents of every item that begins <council>’.

You’ll notice that in your spreadsheet where you have typed the formula above, it gathers the contents (called a value) of each tag within <council>, each tag’s value going into its own column – giving you dozens of columns.

You can continue this logic to look for tags within tags. For example, if you wanted to grab the <name> value from within each <council> tag, you could use:

=ImportXML("http://openlylocal.com/councils/all.xml", "//council//name")

You would then only have one column, containing the names of all the councils – if that’s all you wanted. You could of course adapt the formula again in cell B2 to pull another piece of information. However, you may end up with a mismatch of data where that information is missing – so it’s always better to grab all the XML once, then clean it up on a copy.
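As a rough illustration of what those two queries return, here is a Python sketch using a tiny XML document. The tag names follow the structure described above, but the councils and their values are invented; this is not the contents of the real all.xml file.

```python
import xml.etree.ElementTree as ET

# Invented sample in the shape described above (not real council data).
SAMPLE_XML = """
<councils>
  <council>
    <name>Example Borough Council</name>
    <authority-type>District</authority-type>
  </council>
  <council>
    <name>Sample City Council</name>
    <authority-type>Unitary</authority-type>
  </council>
</councils>
"""

root = ET.fromstring(SAMPLE_XML)

# //council : one row per <council>, one column per tag inside it.
table = [[child.text for child in council] for council in root.iter("council")]

# //council//name : just the <name> value found inside each <council>.
names = [name.text
         for council in root.iter("council")
         for name in council.iter("name")]

print(table)
print(names)
```

The first query gives a table with a row for each council; the second gives a single column of names, which is where the risk of mismatched rows comes from if you build a table one column at a time.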

If the XML is more complex then you can ask more complex questions – which I’ll cover in the second part of this post. You can also put the URL and/or query in other cells to simplify matters, e.g.

=ImportXML(A1, B1)

Where cell A1 contains http://openlylocal.com/councils/all.xml and B1 contains //council (note the lack of quotation marks). You then only need to change the contents of A1 or B1 to change the results, rather than having to edit the formula directly.

If you’ve any other examples, ideas or corrections, let me know. Meanwhile, I’ve published an example spreadsheet demonstrating all the above techniques here.

The style challenge

Time was when a journalist could learn one or two writing styles and stick with them.

They might command enormous respect for being the best at what they did. But sometimes, when that journalist moved to another employer, their style became incongruous.

And they couldn’t change.

This is the style challenge, and it’s one that has become increasingly demanding for journalists in an online age. Because not only must they be able to adapt their style for different types of reporting; not only must they be able to adapt for different brands; not only must they be able to adapt their style within different brands across multiple media; but they must also be able to adapt their style within a single medium, across multiple platforms: Twitter, Facebook, blogs, Flickr, YouTube, or anywhere else that their audiences gather.

Immersion and language

Style is a fundamental skill in journalism. It is difficult to teach, because it relies on an individual immersing themselves in media, and doing so in a way that goes beyond each message to the medium itself.

This is why journalism tutors urge their students so strongly to read as many newspapers as they can; to watch the news and listen to it, obsessively.

Without immersion it is difficult to speak any language.

Now. Some people do immerse themselves and have a handle on current affairs. That’s useful, but not the point. Some do it and gain an understanding of institutions and audiences (that one is left-leaning; this one is conservative with a small c, etc.). This is also useful, but also not the point.

The point is about how each institution addresses each audience, and when.

Despite journalists and editors often having an intuitive understanding of this difference in print or broadcast, over the last decade they’ve often demonstrated an inability to apply the same principles when it comes to publishing online.

And so we’ve had shovelware: organisations republishing print articles online without any changes.

We’ve had opinion columns published as blogs because ‘blogs are all about opinion’.

And we’ve had journalists treating Twitter as just another newswire to throw out headlines.

This is like a person’s first attempt at a radio broadcast where they begin by addressing “Hey all you out there” as if they’re a Balearic DJ.

Good journalists should know better.

Style serves communication

Among many other things a good journalism or media degree should teach not just the practical skills of journalism but an intellectual understanding of communication, and by extension, style.

Because style is, at its base, about communication. It is about register: understanding what tone to adopt based on who you are talking to, what you are talking about, the relationship you seek to engender, and the history behind that.

As communication channels and tools proliferate, we probably need to pay more attention to that.

Journalists are being asked to adapt their skills from print to video; from formal articles to informal blog posts; from Facebook Page updates to tweets.

They are having to learn new styles of liveblogging, audio slideshows, mapping and apps; to operate within the formal restrictions of XML or SEO.

For freelance journalists, commissioning briefs increasingly ask for that flexibility even within the same piece of work, offering extra payments for an online version, a structured version, a podcast, and so on.

These requests are often quite basic – requiring a list of links for an online version, for example – but as content management systems become more sophisticated, those conditions will become more stringent: supplying an XML file with data on a product being reviewed, for example, or a version optimised for search.

What complicates things further is that, for many of these platforms, we are inventing the language as we speak it.

For those new to the platform, it can be intimidating. But for those who invest time in gaining experience, it is an enormous opportunity. Because those who master the style of a blog, or Facebook, or Twitter, or addressing a particular group on Flickr, or a YouTube community, put themselves in an incredible position, building networks that a small magazine publisher would die for.

That’s why style is so important – now more than ever, and in the future more than now.
